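So far, we have focused on defining networks consisting of a sequence input, a single hidden RNN layer, and an output layer. Although there is just one hidden layer between the input at any time step and the corresponding output, there is a sense in which these networks are deep. Inputs from the first time step can influence the outputs at the final time step $T$ (often hundreds or thousands of steps later). These inputs pass through $T$ applications of the recurrent layer before reaching the final output. However, we often also wish to retain the ability to express complex relationships between the inputs at a given time step and the outputs at that same time step. Thus we often construct RNNs that are deep not only in the time direction but also in the input-to-output direction. This is precisely the notion of depth that we have already encountered in our development of MLPs and deep CNNs.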

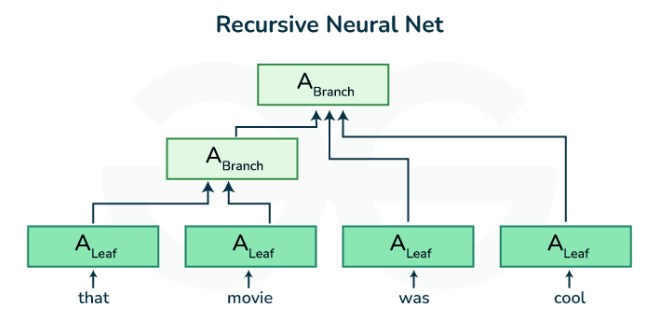

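The standard method for building this sort of deep RNN is strikingly simple: we stack the RNNs on top of each other. Given a sequence of length $T$, the first RNN produces a sequence of outputs, also of length $T$. These, in turn, constitute the inputs to the next RNN layer. In this short section, we illustrate this design pattern and present a simple example of how to code up such stacked RNNs. Fig. 10.3.1 illustrates a deep RNN with $L$ hidden layers. Each hidden state operates on a sequential input and produces a sequential output. Moreover, any RNN cell (white box in Fig. 10.3.1) at each time step depends on both the same layer's value at the previous time step and the previous layer's value at the same time step.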

Fig. 10.3.1 Architecture of a deep RNN.

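Formally, suppose that we have a minibatch input $\mathbf{X}_t \in \mathbb{R}^{n \times d}$ (number of examples: $n$; number of inputs in each example: $d$) at time step $t$. At the same time step, let the hidden state of the $l^{\mathrm{th}}$ hidden layer ($l = 1, \ldots, L$) be $\mathbf{H}_t^{(l)} \in \mathbb{R}^{n \times h}$ (number of hidden units: $h$) and the output layer variable be $\mathbf{O}_t \in \mathbb{R}^{n \times q}$ (number of outputs: $q$). Setting $\mathbf{H}_t^{(0)} = \mathbf{X}_t$, the hidden state of the $l^{\mathrm{th}}$ hidden layer that uses the activation function $\phi_l$ is calculated as follows:

$$\mathbf{H}_t^{(l)} = \phi_l\left(\mathbf{H}_t^{(l-1)} \mathbf{W}_{xh}^{(l)} + \mathbf{H}_{t-1}^{(l)} \mathbf{W}_{hh}^{(l)} + \mathbf{b}_h^{(l)}\right), \tag{10.3.1}$$

where the weights $\mathbf{W}_{xh}^{(l)} \in \mathbb{R}^{h \times h}$ and $\mathbf{W}_{hh}^{(l)} \in \mathbb{R}^{h \times h}$, together with the bias $\mathbf{b}_h^{(l)} \in \mathbb{R}^{1 \times h}$, are the model parameters of the $l^{\mathrm{th}}$ hidden layer. Note that for the first layer ($l = 1$) the input is $\mathbf{X}_t$, so $\mathbf{W}_{xh}^{(1)} \in \mathbb{R}^{d \times h}$.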

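At the end, the calculation of the output layer is based only on the hidden state of the final $L^{\mathrm{th}}$ hidden layer:

$$\mathbf{O}_t = \mathbf{H}_t^{(L)} \mathbf{W}_{hq} + \mathbf{b}_q, \tag{10.3.2}$$

where the weight $\mathbf{W}_{hq} \in \mathbb{R}^{h \times q}$ and the bias $\mathbf{b}_q \in \mathbb{R}^{1 \times q}$ are the model parameters of the output layer.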
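To make the two equations concrete, here is a minimal NumPy sketch of a single time step. It is only an illustration under stated assumptions: every layer uses tanh as its activation, the weights are random, and the function name `deep_rnn_step` and all sizes are hypothetical choices rather than part of d2l.

```python
import numpy as np

def deep_rnn_step(X_t, H_prev, params, W_hq, b_q, phi=np.tanh):
    """One time step of a deep RNN following (10.3.1) and (10.3.2).

    X_t: (n, d) minibatch; H_prev: list of L arrays of shape (n, h)
    holding each layer's hidden state from the previous time step.
    """
    H_below = X_t  # H_t^(0) = X_t
    H_new = []
    for (W_xh, W_hh, b_h), H_l_prev in zip(params, H_prev):
        # (10.3.1): input from the layer below plus this layer's own past
        H_below = phi(H_below @ W_xh + H_l_prev @ W_hh + b_h)
        H_new.append(H_below)
    O_t = H_new[-1] @ W_hq + b_q  # (10.3.2): only the top layer feeds the output
    return O_t, H_new

rng = np.random.default_rng(0)
n, d, h, q, L = 4, 5, 8, 3, 2  # illustrative sizes (assumptions)
params = []
for l in range(L):
    in_dim = d if l == 0 else h  # first layer reads X_t, later layers read H^(l-1)
    params.append((rng.normal(0, 0.01, (in_dim, h)),
                   rng.normal(0, 0.01, (h, h)),
                   np.zeros((1, h))))
W_hq, b_q = rng.normal(0, 0.01, (h, q)), np.zeros((1, q))

X_t = rng.normal(size=(n, d))
H_prev = [np.zeros((n, h)) for _ in range(L)]
O_t, H_t = deep_rnn_step(X_t, H_prev, params, W_hq, b_q)
print(O_t.shape, [H.shape for H in H_t])
```

The returned list of hidden states would be passed back in as `H_prev` at the next time step, which is how information flows along the time direction.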

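Just as with MLPs, the number of hidden layers $L$ and the number of hidden units $h$ are hyperparameters that we can tune. Common RNN layer widths ($h$) are in the range $(64, 2056)$, and common depths ($L$) are in the range $(1, 8)$. In addition, we can easily get a deep gated RNN by replacing the hidden state computation in (10.3.1) with that from an LSTM or a GRU.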
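To get a feel for how these two hyperparameters affect model size, the following sketch counts the learnable parameters implied by (10.3.1) and (10.3.2) for a plain (ungated) deep RNN. The helper name `deep_rnn_num_params` and the sizes used are hypothetical and chosen only for illustration.

```python
def deep_rnn_num_params(d, h, q, L):
    """Count learnable parameters implied by (10.3.1) and (10.3.2)."""
    total = 0
    for l in range(1, L + 1):
        in_dim = d if l == 1 else h      # layer 1 reads the d-dimensional input
        total += in_dim * h + h * h + h  # W_xh, W_hh, b_h
    total += h * q + q                   # output layer: W_hq, b_q
    return total

# Illustrative sizes (assumptions): 28 inputs and outputs, 32 hidden units
for L in (1, 2, 4):
    print(L, deep_rnn_num_params(d=28, h=32, q=28, L=L))
```

Each layer beyond the first adds $2h^2 + h$ parameters, so the count grows linearly in the depth $L$ but quadratically in the width $h$.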

# PyTorch
import torch
from torch import nn
from d2l import torch as d2l

# MXNet
from mxnet import np, npx
from mxnet.gluon import rnn
from d2l import mxnet as d2l

npx.set_np()

# JAX
import jax
from flax import linen as nn
from jax import numpy as jnp
from d2l import jax as d2l

# TensorFlow
import tensorflow as tf
from d2l import tensorflow as d2l

10.3.1. Implementation from Scratch

To implement a multilayer RNN from scratch, we can treat each layer as an RNNScratch instance with its own learnable parameters.

# PyTorch
class StackedRNNScratch(d2l.Module):
    def __init__(self, num_inputs, num_hiddens, num_layers, sigma=0.01):
        super().__init__()
        self.save_hyperparameters()
        # The first layer reads the raw inputs; later layers read hidden states
        self.rnns = nn.Sequential(*[d2l.RNNScratch(
            num_inputs if i==0 else num_hiddens, num_hiddens, sigma)
            for i in range(num_layers)])

# MXNet
class StackedRNNScratch(d2l.Module):
    def __init__(self, num_inputs, num_hiddens, num_layers, sigma=0.01):
        super().__init__()
        self.save_hyperparameters()
        self.rnns = [d2l.RNNScratch(num_inputs if i==0 else num_hiddens,
                                    num_hiddens, sigma)
                     for i in range(num_layers)]

# JAX
class StackedRNNScratch(d2l.Module):
    num_inputs: int
    num_hiddens: int
    num_layers: int
    sigma: float = 0.01

    def setup(self):
        self.rnns = [d2l.RNNScratch(self.num_inputs if i==0 else self.num_hiddens,
                                    self.num_hiddens, self.sigma)
                     for i in range(self.num_layers)]

# TensorFlow
class StackedRNNScratch(d2l.Module):
    def __init__(self, num_inputs, num_hiddens, num_layers, sigma=0.01):
        super().__init__()
        self.save_hyperparameters()
        self.rnns = [d2l.RNNScratch(num_inputs if i==0 else num_hiddens,
                                    num_hiddens, sigma)
                     for i in range(num_layers)]

The multilayer forward computation simply performs forward computation layer by layer.

# PyTorch
@d2l.add_to_class(StackedRNNScratch)
def forward(self, inputs, Hs=None):
    outputs = inputs
    if Hs is None: Hs = [None] * self.num_layers
    for i in range(self.num_layers):
        # Layer i consumes the full output sequence of the layer below
        outputs, Hs[i] = self.rnns[i](outputs, Hs[i])
        outputs = torch.stack(outputs, 0)
    return outputs, Hs

# MXNet
@d2l.add_to_class(StackedRNNScratch)
def forward(self, inputs, Hs=None):
    outputs = inputs
    if Hs is None: Hs = [None] * self.num_layers
    for i in range(self.num_layers):
        outputs, Hs[i] = self.rnns[i](outputs, Hs[i])
        outputs = np.stack(outputs, 0)
    return outputs, Hs

# JAX
@d2l.add_to_class(StackedRNNScratch)
def forward(self, inputs, Hs=None):
    outputs = inputs
    if Hs is None: Hs = [None] * self.num_layers
    for i in range(self.num_layers):
        outputs, Hs[i] = self.rnns[i](outputs, Hs[i])
        outputs = jnp.stack(outputs, 0)
    return outputs, Hs

# TensorFlow
@d2l.add_to_class(StackedRNNScratch)
def forward(self, inputs, Hs=None):
    outputs = inputs
    if Hs is None: Hs = [None] * self.num_layers
    for i in range(self.num_layers):
        outputs, Hs[i] = self.rnns[i](outputs, Hs[i])
        outputs = tf.stack(outputs, 0)
    return outputs, Hs
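The forward pass above processes one whole layer at a time, whereas (10.3.1) is written one time step at a time. The following self-contained NumPy sketch suggests that the two orderings compute the same values. It does not use d2l: the helpers `rnn_layer`, `stacked_forward`, and `stepwise_forward`, the tanh activation, and all sizes are assumptions made for illustration.

```python
import numpy as np

def rnn_layer(inputs, W_xh, W_hh, b_h, H=None):
    """Run one RNN layer over a whole sequence (list of (n, in_dim) arrays)."""
    if H is None:
        H = np.zeros((inputs[0].shape[0], W_hh.shape[0]))
    outputs = []
    for X in inputs:
        H = np.tanh(X @ W_xh + H @ W_hh + b_h)
        outputs.append(H)
    return outputs, H

def stacked_forward(inputs, layers):
    """Layer by layer: each layer consumes the full output sequence below it."""
    outputs, Hs = inputs, []
    for W_xh, W_hh, b_h in layers:
        outputs, H = rnn_layer(outputs, W_xh, W_hh, b_h)
        Hs.append(H)
    return np.stack(outputs, 0), Hs

def stepwise_forward(inputs, layers):
    """Step by step: at each time step, pass through all layers in turn."""
    n = inputs[0].shape[0]
    Hs = [np.zeros((n, W_hh.shape[0])) for _, W_hh, _ in layers]
    outputs = []
    for X in inputs:
        below = X
        for l, (W_xh, W_hh, b_h) in enumerate(layers):
            Hs[l] = np.tanh(below @ W_xh + Hs[l] @ W_hh + b_h)
            below = Hs[l]
        outputs.append(below)
    return np.stack(outputs, 0), Hs

rng = np.random.default_rng(0)
T, n, d, h = 6, 3, 5, 4  # illustrative sizes (assumptions)
layers = [(rng.normal(size=(d if l == 0 else h, h)),
           rng.normal(size=(h, h)), np.zeros((1, h))) for l in range(2)]
inputs = [rng.normal(size=(n, d)) for _ in range(T)]

out_a, Hs_a = stacked_forward(inputs, layers)
out_b, Hs_b = stepwise_forward(inputs, layers)
print(out_a.shape, np.allclose(out_a, out_b))
```

The layer-by-layer order is convenient in code because each layer can be an independent module that maps a sequence to a sequence.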

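As an example, we train a deep RNN model, built from the stacked RNNScratch layers defined above, on The Time Machine dataset (the same as in Section 9.5). To keep things simple, we set the number of layers to 2.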

# PyTorch, MXNet, and JAX (the code is identical in all three)
data = d2l.TimeMachine(batch_size=1024, num_steps=32)
rnn_block = StackedRNNScratch(num_inputs=len(data.vocab),
                              num_hiddens=32, num_layers=2)
model = d2l.RNNLMScratch(rnn_block, vocab_size=len(data.vocab), lr=2)
trainer = d2l.Trainer(max_epochs=100, gradient_clip_val=1, num_gpus=1)
trainer.fit(model, data)

# TensorFlow
data = d2l.TimeMachine(batch_size=1024, num_steps=32)
with d2l.try_gpu():
    rnn_block = StackedRNNScratch(num_inputs=len(data.vocab),
                                  num_hiddens=32, num_layers=2)
    model = d2l.RNNLMScratch(rnn_block, vocab_size=len(data.vocab), lr=2)
trainer = d2l.Trainer(max_epochs=100, gradient_clip_val=1)
trainer.fit(model, data)
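The training calls above pass gradient_clip_val=1. The internals of the d2l trainer are not shown in this section, so the sketch below only illustrates the general idea under the assumption that clipping rescales all gradients jointly by their global L2 norm. The function `clip_by_global_norm` and the toy gradients are hypothetical.

```python
import math

def clip_by_global_norm(grads, clip_val):
    """Rescale a list of gradient vectors so their joint L2 norm is <= clip_val."""
    norm = math.sqrt(sum(g * g for grad in grads for g in grad))
    if norm > clip_val:
        scale = clip_val / norm
        grads = [[g * scale for g in grad] for grad in grads]
    return grads, norm

grads = [[3.0, 0.0], [0.0, 4.0]]  # toy gradients of two parameters, global norm 5
clipped, norm = clip_by_global_norm(grads, clip_val=1.0)
print(norm, clipped)
```

Because every gradient is multiplied by the same factor, the update direction is preserved and only its length is bounded, which helps keep training stable when gradients pass through many time steps and layers.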

10.3.2. Concise Implementation

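Fortunately, many of the logistical details required to implement multiple layers of an RNN are readily available in high-level APIs. Our concise implementation will use such built-in functionalities. The code generalizes the one we used previously in Section 10.2, allowing specification of the number of layers explicitly rather than picking the default of a single layer.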

# PyTorch
class GRU(d2l.RNN):  #@save
    """The multi-layer GRU model."""
    def __init__(self, num_inputs, num_hiddens, num_layers, dropout=0):
        d2l.Module.__init__(self)
        self.save_hyperparameters()
        self.rnn = nn.GRU(num_inputs, num_hiddens, num_layers,
                          dropout=dropout)
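To see what the built-in multilayer GRU returns, the following sketch calls PyTorch's `nn.GRU` directly, without the d2l wrapper. The tensor sizes are arbitrary illustrative choices, and the input is a random tensor rather than real text.

```python
import torch
from torch import nn

# Illustrative sizes (assumptions)
num_steps, batch_size, num_inputs, num_hiddens, num_layers = 5, 3, 28, 32, 2
gru = nn.GRU(num_inputs, num_hiddens, num_layers)

X = torch.randn(num_steps, batch_size, num_inputs)  # time-major input
outputs, H = gru(X)

print(outputs.shape)  # top-layer hidden states for every time step
print(H.shape)        # final hidden state of every layer
```

Only the top layer's hidden states appear in `outputs`, matching (10.3.2), while `H` keeps one final state per layer so that a later call can resume from where this one stopped.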


# MXNet
class GRU(d2l.RNN):  #@save
    """The multi-layer GRU model."""
    def __init__(self, num_hiddens, num_layers, dropout=0):
        d2l.Module.__init__(self)
        self.save_hyperparameters()
        self.rnn = rnn.GRU(num_hiddens, num_layers, dropout=dropout)

Flax takes a minimalistic approach while implementing RNNs. Defining the number of layers in an RNN or combining it with dropout is not available out of the box. Our concise implementation will use all built-in functionalities and add num_layers and dropout features on top. The code generalizes the one we used previously in Section 10.2, allowing specification of the number of layers explicitly rather than picking the default of a single layer.

# JAX
class GRU(d2l.RNN):  #@save
    """The multi-layer GRU model."""
    num_hiddens: int
    num_layers: int
    dropout: float = 0

    @nn.compact
    def __call__(self, X, state=None, training=False):
        new_state = []
        if state is None:
            batch_size = X.shape[1]
            state = [nn.GRUCell.initialize_carry(jax.random.PRNGKey(0),
                     (batch_size,), self.num_hiddens)] * self.num_layers

        GRU = nn.scan(nn.GRUCell, variable_broadcast="params",
                      in_axes=0, out_axes=0, split_rngs={"params": False})

        # Introduce a dropout layer after every GRU layer except last
        for i in range(self.num_layers - 1):
            # Feed each layer the output of the layer below (not the raw
            # input), so that stacks with more than two layers chain correctly
            layer_i_state, X = GRU()(state[i], X)
            new_state.append(layer_i_state)
            X = nn.Dropout(self.dropout, deterministic=not training)(X)

        # Final GRU layer without dropout
        out_state, X = GRU()(state[-1], X)
        new_state.append(out_state)
        return X, jnp.array(new_state)


# TensorFlow
class GRU(d2l.RNN):  #@save
    """The multi-layer GRU model."""
    def __init__(self, num_hiddens, num_layers, dropout=0):
        d2l.Module.__init__(self)
        self.save_hyperparameters()
        # One GRUCell per layer; keras.layers.RNN stacks a list of cells
        gru_cells = [tf.keras.layers.GRUCell(num_hiddens, dropout=dropout)
                     for _ in range(num_layers)]
        self.rnn = tf.keras.layers.RNN(gru_cells, return_sequences=True,
                                       return_state=True, time_major=True)

    def forward(self, X, state=None):
        outputs, *state = self.rnn(X, state)
        return outputs, state

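The architectural decisions, such as choosing hyperparameters, are very similar to those of Section 10.2. We pick the same number of inputs and outputs as we have distinct tokens, i.e., vocab_size. The number of hidden units is still 32. The only difference is that we now select a nontrivial number of hidden layers by specifying the value of num_layers.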

# PyTorch
gru = GRU(num_inputs=len(data.vocab), num_hiddens=32, num_layers=2)
model = d2l.RNNLM(gru, vocab_size=len(data.vocab), lr=2)
trainer.fit(model, data)

model.predict('it has', 20, data.vocab, d2l.try_gpu())

'it has a small the time tr'

# MXNet
gru = GRU(num_hiddens=32, num_layers=2)
model = d2l.RNNLM(gru, vocab_size=len(data.vocab), lr=2)

# Running takes > 1h (pending fix from MXNet)
# trainer.fit(model, data)
# model.predict('it has', 20, data.vocab, d2l.try_gpu())

# JAX
gru = GRU(num_hiddens=32, num_layers=2)
model = d2l.RNNLM(gru, vocab_size=len(data.vocab), lr=2)
trainer.fit(model, data)

model.predict('it has', 20, data.vocab, trainer.state.params)

'it has wo mean the time tr'

# TensorFlow
gru = GRU(num_hiddens=32, num_layers=2)
with d2l.try_gpu():
    model = d2l.RNNLM(gru, vocab_size=len(data.vocab), lr=2)
trainer.fit(model, data)

model.predict('it has', 20, data.vocab)

'it has and the time travel'

10.3.3. Summary

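In deep RNNs, the hidden state information is passed to the next time step of the current layer and to the current time step of the next layer. There exist many different flavors of deep RNNs, such as LSTMs, GRUs, or vanilla RNNs. Conveniently, these models are all available as part of the high-level APIs of deep learning frameworks. Initialization of the models requires care. Overall, deep RNNs require a considerable amount of work (such as tuning the learning rate and gradient clipping) to ensure proper convergence.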

10.3.4. Exercises

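Replace the GRU with an LSTM and compare the accuracy and training speed.

Increase the training data to include multiple books. How low can you go on the perplexity scale?

Would you want to combine sources from different authors when modeling text? Why is this a good idea? What could go wrong?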
