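Many people who are just getting started with machine learning in TensorFlow want to train a model on an image source of their own choosing, and then use it to recognize and classify their own images. The official TensorFlow getting-started tutorials, however, do not explicitly show how to feed custom data into a training model. This article follows the official tutorial "Deep MNIST for Experts" (link: https://www.tensorflow.org/get_started/mnist/pros) and shows how to feed custom images into a TensorFlow training model.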

In the "Deep MNIST for Experts" code, the program uses TensorFlow's bundled MNIST image dataset: mnist.train.images is the training input and mnist.test.images is the validation input. After working through that tutorial, the very high recognition rate of a convolutional neural network is impressive, but a beginner is likely to be left with one question: how do I replace the MNIST dataset with my own image source? For example, if I want to use images stored on my own C drive instead, how should the code change?

Let's first look at the parts of the tutorial code that touch the MNIST images:

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets('MNIST_data', one_hot=True)

batch = mnist.train.next_batch(50)
train_accuracy = accuracy.eval(feed_dict={x: batch[0], y_: batch[1], keep_prob: 1.0})
train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

print('test accuracy %g' % accuracy.eval(feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))

For someone new to TensorFlow, modifying the code above can be a struggle. It took me a fair amount of trial and error before I managed to load my own image set.
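It may help to see what actually has to be replaced. In the excerpt above, `mnist.train.next_batch(50)` returns a pair in which `batch[0]` is fed to `x` (the images) and `batch[1]` is fed to `y_` (the labels). A minimal sketch of an object offering a similar interface over your own data could look like this. The class name `SimpleDataset` is hypothetical and not part of TensorFlow, and this simplified version serves samples in order without any shuffling.

```python
class SimpleDataset:
    """Hypothetical stand-in for mnist.train: serves (images, labels) batches in order."""

    def __init__(self, images, labels):
        if len(images) != len(labels):
            raise ValueError("images and labels must have the same length")
        self.images = images
        self.labels = labels
        self._pos = 0  # index of the next sample to serve

    def next_batch(self, batch_size):
        """Return the next batch_size samples; wrap to the start once exhausted."""
        if self._pos >= len(self.images):
            self._pos = 0
        start, end = self._pos, self._pos + batch_size
        self._pos = end
        # Slicing past the end is safe: the final batch is simply shorter
        return self.images[start:end], self.labels[start:end]
```

Because it only relies on `len()` and slicing, the same sketch works with Python lists and with NumPy arrays.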

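To feed in custom images, you first need to prepare an image set. To save time, we extract the MNIST handwritten digits one by one and save them to the local disk in BMP format. We then read those images back from disk one at a time, assemble them into a collection, and feed that collection to the neural network. For how to extract the MNIST digits, see "How to export handwritten digit images from TensorFlow's mnist dataset".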

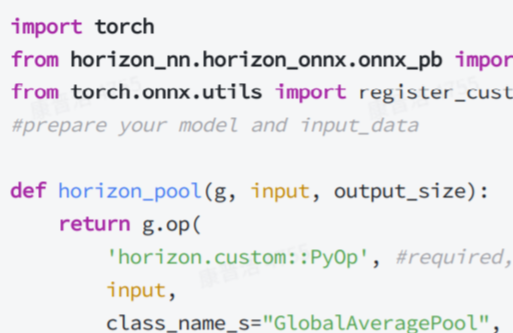
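Before training, it can be worth checking that every label folder actually contains images. The sketch below assumes the one-folder-per-digit layout used by the training code in this article (`./custom_images/0/` through `./custom_images/9/`). The function name `count_images_per_label` is made up for illustration.

```python
import os


def count_images_per_label(root, num_labels=10):
    """Return {label: file count} for the subdirectories root/0 ... root/(num_labels-1)."""
    counts = {}
    for label in range(num_labels):
        label_dir = os.path.join(root, str(label))
        total = 0
        # os.walk yields nothing for a missing directory, so the count stays 0
        for _, _, files in os.walk(label_dir):
            total += len(files)
        counts[label] = total
    return counts
```

Calling `count_images_per_label('./custom_images')` and looking for zeros is a quick way to spot an empty or misnamed folder.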

Once the MNIST handwritten digits have been exported as image files on the local disk, you can train on custom images by following the Python code below:

#!/usr/bin/python3.5
# -*- coding: utf-8 -*-

# Uses the TensorFlow 1.x graph API (tf.placeholder, tf.Session)
import os

import numpy as np
import tensorflow as tf
from PIL import Image

# First pass over the image directories: count the total number of images
input_count = 0
for i in range(0, 10):
    image_dir = './custom_images/%s/' % i  # Change this to your own image directory; i is the class label
    for rt, dirs, files in os.walk(image_dir):
        for filename in files:
            input_count += 1

# Define arrays with the required number of dimensions and the length of each dimension
input_images = np.array([[0]*784 for i in range(input_count)])
input_labels = np.array([[0]*10 for i in range(input_count)])

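# Second pass over the image directories: build the image data and the labels
index = 0
for i in range(0, 10):
    image_dir = './custom_images/%s/' % i  # Change this to your own image directory; i is the class label
    for rt, dirs, files in os.walk(image_dir):
        for filename in files:
            # Join with rt so that files found in nested subdirectories resolve correctly
            filename = os.path.join(rt, filename)
            # Convert to 8-bit grayscale so that getpixel returns a single integer
            img = Image.open(filename).convert('L')
            width = img.size[0]
            height = img.size[1]
            for h in range(0, height):
                for w in range(0, width):
                    # Pixels brighter than 230 become 0, all others become 1.
                    # This processing thins the strokes of the digits, which helps recognition accuracy.
                    if img.getpixel((w, h)) > 230:
                        input_images[index][w+h*width] = 0
                    else:
                        input_images[index][w+h*width] = 1
            input_labels[index][i] = 1
            index += 1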

# Define the input nodes: the set of image pixel-value matrices and the image labels (the digits they represent)
x = tf.placeholder(tf.float32, shape=[None, 784])
y_ = tf.placeholder(tf.float32, shape=[None, 10])
x_image = tf.reshape(x, [-1, 28, 28, 1])

# Define the variables and ops of the first convolutional layer
W_conv1 = tf.Variable(tf.truncated_normal([7, 7, 1, 32], stddev=0.1))
b_conv1 = tf.Variable(tf.constant(0.1, shape=[32]))
L1_conv = tf.nn.conv2d(x_image, W_conv1, strides=[1, 1, 1, 1], padding='SAME')
L1_relu = tf.nn.relu(L1_conv + b_conv1)
L1_pool = tf.nn.max_pool(L1_relu, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Define the variables and ops of the second convolutional layer
W_conv2 = tf.Variable(tf.truncated_normal([3, 3, 32, 64], stddev=0.1))
b_conv2 = tf.Variable(tf.constant(0.1, shape=[64]))
L2_conv = tf.nn.conv2d(L1_pool, W_conv2, strides=[1, 1, 1, 1], padding='SAME')
L2_relu = tf.nn.relu(L2_conv + b_conv2)
L2_pool = tf.nn.max_pool(L2_relu, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

# Fully connected layer
W_fc1 = tf.Variable(tf.truncated_normal([7 * 7 * 64, 1024], stddev=0.1))
b_fc1 = tf.Variable(tf.constant(0.1, shape=[1024]))
h_pool2_flat = tf.reshape(L2_pool, [-1, 7*7*64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Dropout
keep_prob = tf.placeholder(tf.float32)
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

# Readout layer
W_fc2 = tf.Variable(tf.truncated_normal([1024, 10], stddev=0.1))
b_fc2 = tf.Variable(tf.constant(0.1, shape=[10]))
y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

# Define the optimizer and the training op
cross_entropy = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

with tf.Session() as sess:
    sess.run(tf.global_variables_initializer())

    print("Read %s input images and %s labels in total" % (input_count, input_count))

    # Set the number of inputs per training op and the number of iterations.
    # To support any total number of images, a remainder is defined: for example,
    # with 60 inputs per training op and 150 images in total, the first two runs
    # take 60 images each and the last run takes 30 (the remainder).
    batch_size = 60
    iterations = 100
    batches_count = input_count // batch_size
    remainder = input_count % batch_size
    # Only count the final partial batch when there is one
    total_batches = batches_count + (1 if remainder > 0 else 0)
    print("Dataset split into %s batches: %s images per full batch, %s in the final partial batch" % (total_batches, batch_size, remainder))

    # Run the training iterations
    for it in range(iterations):
        # The key here is that the input arrays must be converted to np.array
        for n in range(batches_count):
            train_step.run(feed_dict={x: input_images[n*batch_size:(n+1)*batch_size], y_: input_labels[n*batch_size:(n+1)*batch_size], keep_prob: 0.5})
        if remainder > 0:
            start_index = batches_count * batch_size
            # The slice end is exclusive, so it must be input_count;
            # input_count-1 would silently drop the last image
            train_step.run(feed_dict={x: input_images[start_index:input_count], y_: input_labels[start_index:input_count], keep_prob: 0.5})

        # Every five iterations, check whether accuracy has reached 100%; if so, leave the loop
        iterate_accuracy = 0
        if it % 5 == 0:
            iterate_accuracy = accuracy.eval(feed_dict={x: input_images, y_: input_labels, keep_prob: 1.0})
            print('iteration %d: accuracy %s' % (it, iterate_accuracy))
            if iterate_accuracy >= 1:
                break

    print('Training complete!')
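The remainder handling in the training loop can also be expressed as a small generator of slice boundaries. This is only a sketch, and `batch_slices` is a hypothetical helper name. Because Python slice ends are exclusive, the last batch has to end at `total`, not `total - 1`.

```python
def batch_slices(total, batch_size):
    """Yield (start, end) index pairs covering range(total) in batches.

    The final pair is shorter when total is not a multiple of batch_size.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, total, batch_size):
        # min() clips the last batch; end is exclusive, as in Python slicing
        yield start, min(start + batch_size, total)


# With 150 images and a batch size of 60, the batches hold 60, 60 and 30 images
for start, end in batch_slices(150, 60):
    print(start, end, end - start)
```

Each pair can be used directly as `input_images[start:end]` and `input_labels[start:end]`, so full batches and the final partial batch go through the same code path.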

A screenshot of the output produced by running the training script above is shown below:

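For the model-building parts of the code above, please refer to the official "Deep MNIST for Experts" tutorial. The main point to take away from this article is how to assemble a local image source into a format that feed_dict accepts. The two most important lines are these: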

# Define arrays with the required number of dimensions and the length of each dimension
input_images = np.array([[0]*784 for i in range(input_count)])
input_labels = np.array([[0]*10 for i in range(input_count)])

They correspond to the two placeholders used in feed_dict:

x = tf.placeholder(tf.float32, shape=[None, 784])
y_ = tf.placeholder(tf.float32, shape=[None, 10])

Seen this way, it is quite simple, isn't it?
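As a possible alternative to filling the arrays pixel by pixel, the same two arrays can be built with vectorized NumPy calls. The sketch below assumes the pixel values have already been read as 0-255 grayscale numbers. The function name `to_feed_arrays` and its parameters are hypothetical, and the threshold of 230 is taken from the loop in this article.

```python
import numpy as np


def to_feed_arrays(pixel_rows, labels, num_classes=10, threshold=230):
    """Convert grayscale pixel rows and integer labels into feed_dict-ready arrays.

    pixel_rows: sequence of equal-length sequences of 0-255 grayscale values.
    labels: sequence of integer class labels in range(num_classes).
    """
    pixels = np.asarray(pixel_rows)
    # Same rule as the per-pixel loop: values above the threshold become 0, all others 1
    images = np.where(pixels > threshold, 0, 1).astype(np.float32)
    # One-hot encode: row k has a single 1 in column labels[k]
    one_hot = np.zeros((len(labels), num_classes), dtype=np.float32)
    one_hot[np.arange(len(labels)), labels] = 1.0
    return images, one_hot


# Two fake 28x28 images (flattened to 784 values) labelled 7 and 2
rows = [[255] * 784, [0] * 784]
images, one_hot = to_feed_arrays(rows, [7, 2])
print(images.shape, one_hot.shape)  # (2, 784) (2, 10)
```

The resulting shapes line up with the placeholder shapes `[None, 784]` and `[None, 10]`, where `None` stands for the number of images in the batch.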

Original title: How to train on and recognize/classify custom images with TensorFlow

Source: WeChat official account Imagination Tech (WeChat ID: Imgtec). Please credit the source when reposting.
